About six months ago, I was reading up on music creation and the program Max/MSP, a graphical workflow environment for creating and manipulating audio and video. Very complex, but powerful stuff. The author of Max/MSP, Miller S. Puckette, later created a piece of similar, open-source software called Pure Data (pd). Pure Data is similar to Max/MSP except that it’s free for anyone to use and make stuff with.

In this post, we’ll go over what exactly I managed to do with PD. It involved using an M-Audio MIDI controller to manipulate videos in a real-time graphical environment. Sound too complicated or scary for you? It’s really not. C’mon, I’ll show you how it works.

So first off, this isn’t a tutorial. That will come next week as it’s simply too much to cover in one post. This is merely an explanation of what I’ve created and what was used to do it. To understand this piece, you should have a basic understanding of how PD and GEM work. You can read more about them here.

I work with audio and video a lot and tend to make some pretty unique creations in music. I wanted to give my music some video to match it – all crazy-like and tripped out. I also wanted the ability to edit effects in real time. Pure Data seemed like the perfect way of achieving this, so I set out on creating a patch.

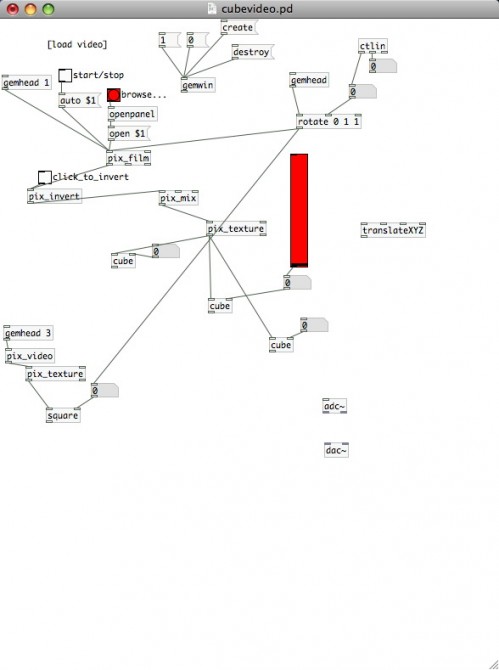

The patch! Cubevideo.pd

The image we see above is the patch I currently have running, cubevideo.pd. From the top down, we can see buttons that start and stop video playback, buttons for loading in video files, buttons for inverting the video color and some other text. There’s also a red vertical slider, which controls the size of a cube we’ve rendered. The cube displays whichever video we choose by mapping it onto its faces as a texture.

There are a few cubes here, which lets me layer multiple effects. You’ll also see a third cube down on the left that uses the [pix_video] object to import live video from my iSight.

Additionally, we see the [ctlin] object here and there. This allows us to route MIDI signals from our external controller (in this case, an M-Audio Torq Xponent) into PD, where they can be used to control specific values.
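To give a rough idea of what "controlling a value" means here: a MIDI controller message carries a 7-bit number from 0 to 127, and that number has to be scaled into whatever range the target parameter expects. Below is a minimal Python sketch of that scaling, not code from the patch itself. The function name `cc_to_range` and the 0.5 to 4.0 cube size range are made up for illustration.

```python
def cc_to_range(value: int, low: float, high: float) -> float:
    """Linearly map a 7-bit MIDI controller value (0-127) onto [low, high]."""
    if not 0 <= value <= 127:
        raise ValueError("MIDI controller values are 7-bit: 0 to 127")
    return low + (value / 127) * (high - low)


if __name__ == "__main__":
    # Hypothetical mapping: one knob drives a cube size between 0.5 and 4.0.
    for cc in (0, 64, 127):
        print(cc, round(cc_to_range(cc, 0.5, 4.0), 3))
```

Inside a patch, the same idea would be built from arithmetic objects wired after [ctlin] rather than written as text, but the math is the same.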

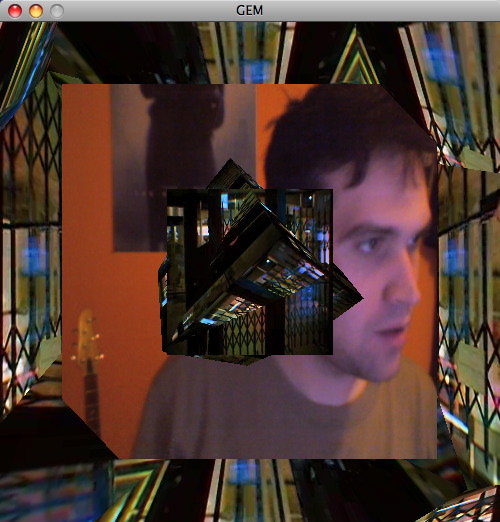

So what happens when we render a window in GEM and load up a few videos?

Manipulating video in real-time

Holy smokes, Batman! Just how much LSD did you drop?

None! This is all being done in real time via a PD library called GEM. GEM handles image and video manipulation inside a patch, which gives you a lot of room to get creative.

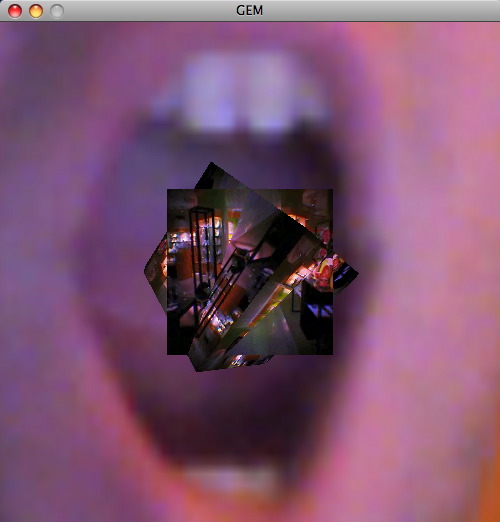

If we adjust the vertical slider some and change the numerical value of the third cube’s size, we end up with this:

Is that a mouth or are you just happy to see me?

So essentially, the possibilities are endless. If we want to invert our iSight video, we simply add a [pix_invert] object and link everything together correctly. Sick of cubes? Add some cones or triangles to mix it up a little.
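If you're wondering what inverting a video actually does to the picture, here is a small Python sketch of the general idea, assuming 8-bit RGB pixels: every channel value is flipped to 255 minus itself. This is only an illustration of color inversion, not GEM's actual implementation, and the function names are my own.

```python
def invert_pixel(rgb):
    """Invert one 8-bit RGB pixel: each channel c becomes 255 - c."""
    return tuple(255 - c for c in rgb)


def invert_frame(frame):
    """Invert every pixel in a frame given as rows of (r, g, b) tuples."""
    return [[invert_pixel(px) for px in row] for row in frame]


if __name__ == "__main__":
    # A tiny 1x2 "frame": one black pixel and one orange-ish pixel.
    frame = [[(0, 0, 0), (255, 128, 0)]]
    print(invert_frame(frame))
```

Inverting twice gets you back to the original frame, which is a handy sanity check.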

Here is a video I recorded of Pure Data. It shows me manipulating a Rihanna video in real time using my MIDI controller. It’s very cool-looking, but really just serves as a working example. There is no audio, FYI. I did this to save bandwidth/filesize. Put on your own Rihanna CD if it feels incomplete.

Remixing Rhianna with Pure Data from Vincent Veneziani on Vimeo

This patch is still a work in progress. I’m adding audio integration to the videos and a few other tricks I don’t want to reveal yet. What I will reveal, however, is my source code. If you’d like to give my cubevideo.pd patch a try, you can download it here. Make sure you have GEM and the latest version of Pure Data installed. On OS X, which is what I use, GEM is installed by default with Pure Data.

People might ask: what’s the point of all this? There is none. This is merely an experiment in computing and art. Manipulating video with knobs and buttons is interesting to me and drives me to experiment.

If you want to dive further into Pure Data, I’d recommend downloading tutorials and examples that help you understand it better. Through trial and error, you’ll end up creating amazing things that will surprise many. Next time, we’ll discuss audio manipulation with a PlayStation controller.

Gearfuse Technology, Science, Culture & More

Hi, I’m a beginner in PD, I’ve been wanting to start playing with GEM and this seems like the perfect patch to do it, it’s very kind of you to share your source code but unfortunately I can’t download it from the link you provided. Maybe there’s some mistake?

Thanks, I’m looking forward to reading your next post on the subject.

Is it me or is the link not working…. Shame I love your work..

Hey, thanks for the heads up. I’ll reupload the tutorial files today.

great work. thanks for sharing!

the link still isn’t working, i’d love to experiment with your patch!

Brilliant!

But yes, the patch has probably expired on that server.

How about posting it up on the forum at http://puredata.hurleur.com/

It is bound to be thoroughly appreciated.

BTW ~ what’s an iSight video?

Thank you, and keep well ~ Shankar